This page is written directly in Markdown - one file for the body, plus one for the header and one for the footer.

For each HTTP request your browser makes to Markdown@Edge, a Fastly server checks its cache for the relevant Markdown files, and either uses the cached files as-is or makes a request to my web server at nora.codes to retrieve them. The WebAssembly code running on that server, compiled from a single Rust file, then combines them, parses the resulting string as Markdown, and uses a streaming event-based renderer to convert that Markdown to HTML. The only dependency of the service, other than the Fastly SDK, is Raph Levien's pulldown-cmark.
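The cache-then-combine flow described above can be sketched with nothing but the Rust standard library. This is an illustration under assumptions, not the service's actual code: `EdgeCache`, `get_or_fetch`, and `combine` are hypothetical names, the `HashMap` stands in for the platform's cache, and the closure stands in for the request to the origin. The Markdown-to-HTML step, which the real service hands to pulldown-cmark, is left out.

```rust
use std::collections::HashMap;

/// Hypothetical in-memory stand-in for the edge cache, keyed by file path.
pub struct EdgeCache {
    entries: HashMap<String, String>,
}

impl EdgeCache {
    pub fn new() -> Self {
        EdgeCache { entries: HashMap::new() }
    }

    /// Return the cached file if present; otherwise call `fetch_origin`
    /// (a stand-in for the request to the origin server), store the
    /// result, and return it.
    pub fn get_or_fetch<F>(&mut self, path: &str, fetch_origin: F) -> String
    where
        F: FnOnce(&str) -> String,
    {
        if let Some(hit) = self.entries.get(path) {
            return hit.clone();
        }
        let body = fetch_origin(path);
        self.entries.insert(path.to_string(), body.clone());
        body
    }
}

/// Join header, body, and footer into the single string that would then
/// be parsed as Markdown. The blank-line separator is an assumption; it
/// keeps the three files from running into one another's paragraphs.
pub fn combine(header: &str, body: &str, footer: &str) -> String {
    [header, body, footer].join("\n\n")
}
```

Calling `get_or_fetch` twice with the same path only invokes the origin closure the first time; the second call is served from the map.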

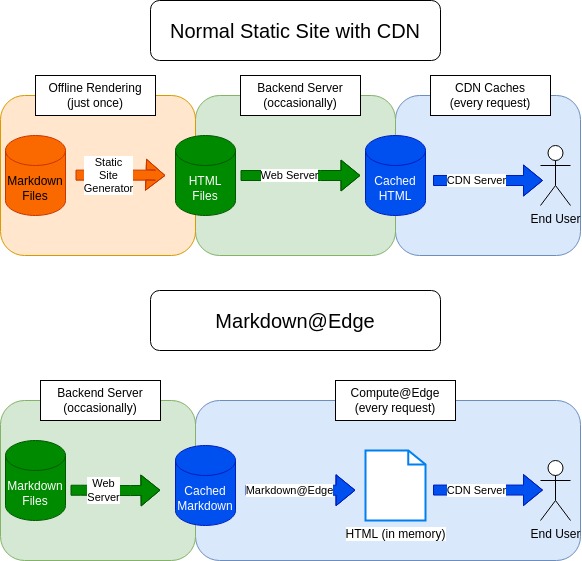

In a normal website written with Markdown, this rendering process is done just once, when converting source Markdown files into the HTML files that are actually served to your browser. In this case, the rendering has been moved "to the edge" and is running on a server that is probably physically much closer to you than my webserver is.

The renderer takes advantage of the fact that Markdown allows embedding raw HTML: anything with a "text" MIME type is embedded in the Markdown source, while anything without a "text" MIME type (images, binary data, and so forth) is passed through unchanged. That allows the above JPG image to embed properly, while also allowing the linked SVG image page to be rendered as a component of a Markdown document.
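A minimal sketch of that decision, assuming it is made from the `Content-Type` header of the fetched resource. The `Handling` enum and `classify` function are hypothetical names for illustration; the real service may structure this differently.

```rust
/// What to do with a fetched resource (hypothetical names).
#[derive(Debug, PartialEq)]
pub enum Handling {
    /// Splice the content into the Markdown source before rendering.
    EmbedInMarkdown,
    /// Return the bytes to the browser unchanged.
    PassThrough,
}

/// Classify a resource by the top-level type of its Content-Type value.
/// A missing header is treated as non-text, which is an assumption.
pub fn classify(content_type: Option<&str>) -> Handling {
    // Drop parameters such as "; charset=utf-8" before inspecting the type.
    let essence = content_type
        .unwrap_or("")
        .split(';')
        .next()
        .unwrap_or("")
        .trim();
    // The top-level type is everything before the first '/'.
    let top_level = essence.split('/').next().unwrap_or("");
    if top_level.eq_ignore_ascii_case("text") {
        Handling::EmbedInMarkdown
    } else {
        Handling::PassThrough
    }
}
```

With this rule, `text/markdown; charset=utf-8` is embedded and `image/jpeg` is passed through untouched.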

Why?

I work on the Compute@Edge platform and wanted to get some hands-on experience with it. This is not a good use of the platform for various reasons; among other things, it buffers all page content in memory for every request, which is ridiculous. A static site generator like Hugo or Zola is an objectively better choice for bulk rendering, while the C@E layer is better for filtering, editing, and dynamic content.

That said, this does demonstrate some interesting properties of the C@E platform. For instance, the source files are hosted in a subdirectory of my webserver; in theory, you could directory-traverse your way into my blog source, but in fact, you can't. I also have a Clacks Overhead set for my webserver, and the C@E platform is set up to make passing through existing headers trivial even with entirely synthetic responses like these, so that header is preserved.
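The page does not say how the traversal is prevented, so the following is only one common way to get that property, not a description of this service. It maps a request path onto a source subdirectory and refuses any path containing a `..` segment. The function name `origin_path` and the base directory used in the example are hypothetical.

```rust
/// Map a request path onto the source subdirectory `base`, returning
/// `None` for any path that tries to climb out of it.
/// Note: this sketch does not decode percent-escapes such as "%2e%2e";
/// a real guard would need to decode before checking.
pub fn origin_path(base: &str, request_path: &str) -> Option<String> {
    let mut segments = Vec::new();
    for segment in request_path.split('/') {
        match segment {
            // Empty segments (from "//" or a leading "/") and "." are no-ops.
            "" | "." => continue,
            // Any attempt to move to a parent directory is refused outright.
            ".." => return None,
            // Backslashes are refused so they cannot act as separators upstream.
            s if s.contains('\\') => return None,
            s => segments.push(s),
        }
    }
    Some(format!("{}/{}", base.trim_end_matches('/'), segments.join("/")))
}
```

For example, with a base of `/md-src`, the path `/index.md` maps to `/md-src/index.md`, while `/../blog/post.md` is rejected.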